SQL Server backup is a page-by-page physical copy of the database files. And, generally, the size of a backup is about the size of the database. However, there are several ways to cut the backup size substantially.

Reducing the backup size has the following benefits:

-

More restore points — The smaller the backup size, the more you can save, and therefore you can make more restore points and choose a more suitable date to restore in case of failure.

-

Less strain on network bandwidth — According to the 3-2-1 rule, you should keep one copy of the data outside your network — the smaller the backup, the lower the bandwidth requirements for your network connection.

-

Accelerating the backup/restore cycle — The smaller the backup, the faster it can be transferred over the network outside the server and back again.

-

You can back up more often — All of the above allows you to do backups regularly.

Let’s check out the ways to reduce the backup size.

1. Compression

A database backup created by the BACKUP DATABASE command can be very well compressed. For SQL Server, there are two ways to compress data: compress on the fly or compress the finished backup file with a compression utility.

On-the-fly compression

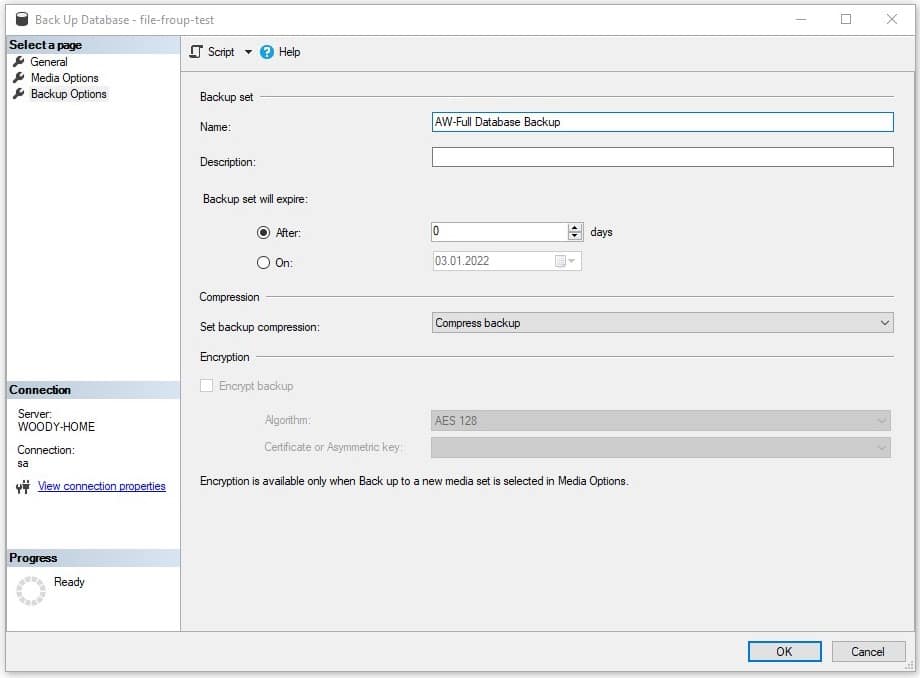

In SSMS, you can set the compression level when creating a backup:

Or you can specify the COMPRESSION option when running the T-SQL backup command:

BACKUP DATABASE [dbName] TO DISK = N'W:\dbName.bak' WITH COMPRESSION

As a result of performing a backup with the “COMPRESSION” option, you will get a backup that is already compressed.

On-the-fly compression has two essential advantages. First, it creates a backup that is already compressed, so you don’t need an intermediate free space for an uncompressed backup. Second, creating a backup that is already compressed is faster than two separate operations (making a backup then compressing the backup).

However, this method has disadvantages too. On-the-fly backup compression creates a high CPU load, and you can’t specify the compression ratio and compression process priority. In addition, if your server is running at full capacity, on-the-fly backup compression may cause serious delays on your SQL Server.

Compressing with a utility

After creating a backup, it can be compressed separately. The best way to do this is with the 7zip utility. The 7zip utility can be more finely tuned to the needs of the server. For example, if you want to use fast compression with 12 threads, then use this command:

"C:\Program Files\7-Zip\7z.exe" a -mx=1 -mmt=12 w:\backup.7z w:\backup.bak

The main advantage of compressing with 7zip is that you can fine-tune the compression process. For example, specify the number of compression threads, compression ratio, etc.

Useful parameters for 7zip

-m0=lzma — forced use of lzma compression algorithm

-mmt=x — where x is the number of threads. The more threads, the greater the load on the system.

-mx=x — where x is the compression ratio from 1 to 9. The lower the compression ratio, the faster it is done.

Unlike on-the-fly compression, you might need more free disk space if you use a compression utility because you need to store both compressed and uncompressed backups at the same time.

2. Differential and Transaction Log Backups

An incremental backup is a backup that contains only the data that has changed since the previous backup was created. However, to restore data from this backup, you need to restore the backup itself and all the data that preceded it.

Incremental backups are smaller and faster. SQL Server can make two types of incremental backups, Differential backup, and Transaction Log backup.

Differential

A differential backup contains all data that has changed since the last full backup was created. Therefore, before making a Differential backup, you need to create a Full backup.

BACKUP DATABASE [test-db] TO DISK = 'w:\full.bak'

Now you can create a Differential backup:

BACKUP DATABASE [test-db] TO DISK = 'w:\diff-1.bak' WITH DIFFERENTIAL

You can restore a Differential backup with the following command:

RESTORE DATABASE [test-db] FROM DISK = 'w:\full.bak' WITH NORECOVERY, REPLACE RESTORE DATABASE [test-db] FROM DISK = 'w:\diff-1.bak'

Differential backups take up little space and are made quickly. But as changes accumulate in the database, the size and speed of making a full backup will increase. So, to keep a diff backup from growing in size, you should create a full backup from time to time. For example, you can make a daily diff backup and a full backup once a week.

Transaction log

Transaction log backup contains all data that has changed since any last backup, including another transaction log backup. This way, you can create chains of very long backups, but the first one in the chain is a full backup.

BACKUP DATABASE [test-db] TO DISK = 'w:\full.bak'

Now you can start creating Transaction Log backups.

BACKUP LOG [test-db] TO DISK = 'w:\log1.bak'

BACKUP LOG [test-db] TO DISK = 'w:\log2.bak'

To restore the last backup you must first restore all the previous ones.

RESTORE DATABASE [test-db] FROM DISK = 'w:\full.bak' WITH NORECOVERY, REPLACE RESTORE LOG [test-db] FROM DISK = 'w:\log-1.bak' WITH NORECOVERY RESTORE LOG [test-db] FROM DISK = 'w:\log-2.bak'

Since a transaction log backup keeps all changes made since the last transaction log backup, you don’t need to create a full backup from time to time. However, a chain of transaction log backups can be extensive.

This is a very powerful tool, and you can do backups very often, for example, every 15 minutes, and full backups once a day.

The difficulty is that you need all the backup logs plus a full backup to restore. In addition, it’s easy to get confused with so many files. In this case, you can use SqlBak, which remembers which backups it did and allows you to restore the database in one click without having to do anything else.

3. Don’t keep everything in one database

As a rule, different data have different requirements for security and duration of storage. For example, user logs can generally not be backed up, while data related to financial transactions must be backed up as often as possible.

Consider moving some large directories to a separate database on the same SQL Server instance. The same goes for various logs.

Cross-database queries have no performance penalty, and your queries will also be fast since a single query scheduler handles them. If no transactional consistency is required between the data and no foreign key checks are necessary, you can move the data to two different databases.

4. Backing up in multiple files

If you run into a problem that there is not enough space to create a backup, but you have several disks, you can create a backup in multiple files, each of which will be located on its own disk.

For example, this is how to divide a backup file between the W and Z drives

BACKUP DATABASE [My-Database] TO DISK = 'W:\My-Database-backup-1.bak', DISK = 'Z:\My-Database-backup-1.bak'

Restoring a backup from two files is also easy

RESTORE DATABASE [My-Database] FROM DISK = N'W:\My-Database-backup-1.bak', DISK = N'Z:\My-Database-backup-1.bak'

It won’t reduce the total size of a backup copy, but if you are looking for a way to split the backup into smaller pieces due to space limitations then this method is for you.

5. Logical backup

When you create a classic physical SQL Server backup, the database files are copied to the backup file. However, the database files contain data and indexes that can take up a lot of space. You can bypass this limitation by making a Logical backup.

Unlike a physical backup, a Logical backup is not a copy of database files but a set of SQL definitions for creating database objects (tables, views, user procedures) and a set of SQL commands for filling tables with data.

The raw Logical backup is very redundant. However, it often wins in size over the compressed physical backup (once compressed).

If you make a logical backup of the active database, it is recommended to take a snapshot of the database and make a backup based on that snapshot. This will ensure the transactional consistency of the data.

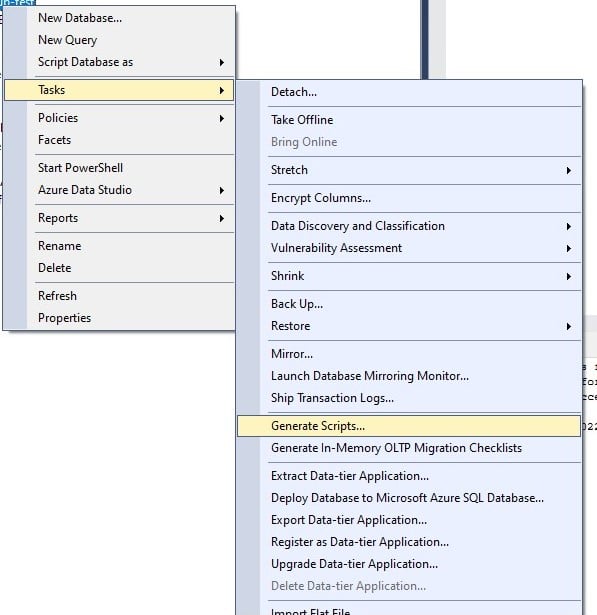

To make a Logical Database Backup via SSMS, select the database, click Task, and then Generate Scripts.

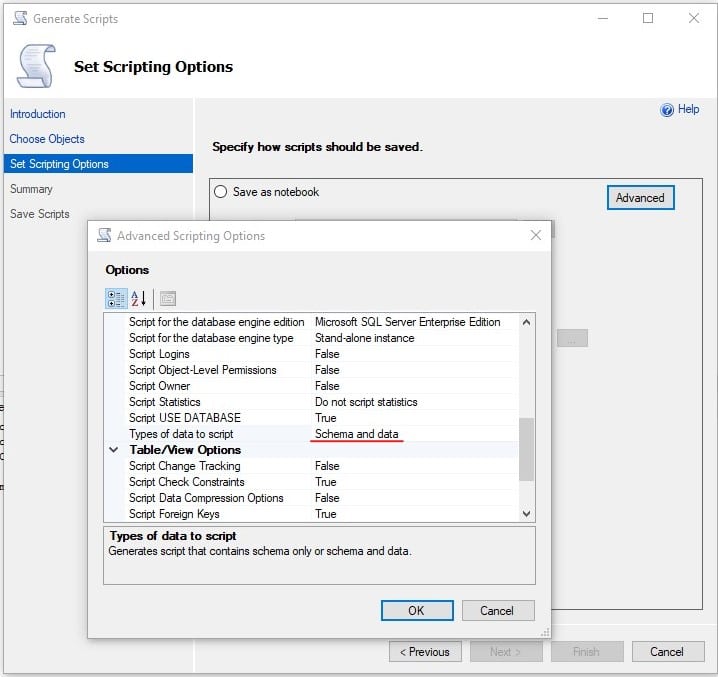

A wizard will appear in which you will need to select the path to the backup file and which objects should be saved to the file. At the third step of the wizard, go to Advanced Option and specify the value of “Types of data to script” = Schema and data.

The output will be a file containing T-SQL instructions for creating a database and filling it with data.

The best way to restore this file is to use the sqlcmd utility installed with SQL Server.

sqlcmd -S myServer\instanceName -i C:\backup.sql

A compressed Logical backup usually takes less space than a compressed full physical backup. In addition, you can restore only some tables, but not the whole logical backup, although manual manipulations with the backup file will be needed. Also, logical backup can be restored on the SQL Server version lower than the original database version.

However, logical backup does not support incremental backups and puts quite a strain on the database server, and its creation and restoration take a long time. Therefore, you shouldn’t use it as a primary backup, but if you need to store your backup for an extended period, you’ll be fine.

Bottom line

The first four methods can be combined together. Think about the architecture of your database and place things that don’t need to be stored for a long time or backed up often into separate databases. Use Differential and Transaction Log backups. You can do them much more often, then compress them with 7zip. This will allow you to backup very often and store them for a long time. To simplify backup and restore management, you can use SqlBak, which will make a backup for you and, if needed, will find everything you need to be restored in just one click.

If you need to create a backup for long-term storage, consider making a Logical backup, for example, once a year. Long-term backups are usually not required for emergency recovery but auditing and reconciliation purposes. Data in a Logical backup can be viewed with a text editor. Also, you can restore only a part of data from a Logical backup.

And most importantly, any backup scenario should be tested in a test environment. Do not forget about this.